Introduction

When we were building the Miru Agent and SDK for our configuration management system, we hit an early design decision: how should these two components talk to each other?

Both would run on our customers’ robots: the Agent as a systemd service and the SDK integrated into their application code. That meant both had to be fast and lightweight. Our customers are running large models on constrained edge computers. They don’t have room for unnecessary CPU overhead or bloated dependencies.

We’ve spent years building SaaS, so our first instinct was familiar: spin up a REST API on localhost, expose a few endpoints, and call it a day.

But when we dug deeper into the requirements, that approach didn’t hold up. We didn’t want the overhead of the full TCP/IP stack, port management, serialization, or a localhost server to monitor.

And that’s how we landed on Unix sockets.

In this blog, we’ll explore what Unix sockets are, how they work, and why they work wonderfully for Miru’s architecture!

What is a Socket?

Before diving into Unix sockets, let’s zoom out a level: what are sockets?

A socket is an endpoint for communication between two programs. You can think of it as a virtual pipe that allows us to send and receive data, either over a network or locally.

At the OS level, a socket is just a file descriptor, the same abstraction Unix uses for files and for the standard input (stdin) and output (stdout) streams. In Unix, you read() from and write() to a file. You do the same with a socket.

From the programmer's perspective, the abstractions are similar, but the striking difference is that sockets are ‘active’.

When you write to a file, it’s saved to disk. Another program can open it and read the data later. A socket, on the other hand, is a live, bidirectional channel. Both sides can send and receive data in real time.

Where Are Sockets Used?

Sockets are ideal when you need a live, two-way channel between ‘components’ (machines, containers, or processes).

You’ll find them everywhere:

Client/server architecture

When your browser loads a webpage, it opens a socket to the web server. In fact, you're reading this blog through one right now!

Inter-process communication (IPC) on the same device

Many core Linux services, like journald and systemd, talk to each other using Unix domain sockets.

Remote procedure calls (RPC) between distributed systems

gRPC, for example, uses sockets under the hood to call functions on remote machines as if they were local.

Streaming systems

Sockets are used to stream tokens from an LLM inference server to an application like ChatGPT.

If their power and ubiquity haven’t convinced you yet, let’s dive into why you should be using sockets in your systems.

Why Should I Be Using Sockets?

Why should you?

Well, if you're communicating over a network, you don't have much of a choice. Protocols like HTTPS, gRPC, and WebSockets typically run over TCP sockets, the default transport layer.

But when you’re working with local communication, you do have options. Unix domain sockets are often the best choice, but there are other inter-process communication (IPC) mechanisms too. Let's walk through the main ones:

Alternatives:

1. Pipes

Type: Unidirectional stream

How it works: One process writes to one end, another reads from the other. It’s a one-way channel.

Example:

A process's stdout piped into another process via | in a shell.

It’s simple to set up and great for chaining processes.

2. Message Queues (POSIX or System V)

Type: FIFO messaging system.

How it works: One process puts a message into the queue, and another takes it out. Messages can be tagged with IDs or metadata.

It’s useful for structured communication and deferred processing. However, it requires more management: you’ll need to handle stale messages and queue overflows.

3. File Watchers (e.g., inotify on Linux)

Type: File system-based signaling.

How it works: One process writes to a file. Another watches the file or directory for changes.

These are easy to understand and set up, but not suited for real-time or high-frequency data exchange.

Unix sockets hit the sweet spot. Compared to other local IPC methods, sockets:

Support full duplex communication (vs. pipes)

Require less setup and system configuration (vs. message queues)

Are faster and more reliable (vs. file watchers)

How Do Sockets Work?

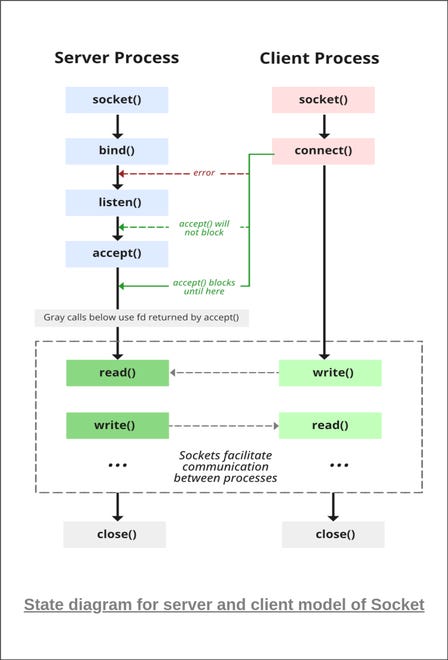

Let’s walk through the full lifecycle, from setup to close, from both the server and client perspectives.

Server

The server initially sets up the socket, announces its presence, and waits for incoming connections.

socket(): First, the server creates a socket using the socket() system call. This returns a file descriptor that represents the socket in all later operations.

bind(): This binds the socket to a known address.

For TCP, that’s an IP address and port.

For Unix sockets, it’s a file path like /run/miru/miru.sock.

listen(): The socket is marked as passive, which means it is ready to accept incoming connections. Now, the server “starts listening.”

accept(): This call blocks until a client connects. Once it does:

The OS creates a new socket specifically for that connection (a 1:1 relationship between socket and client).

The original socket continues to listen for new clients.

read()/write(): Now, the server can send and receive data with the client using I/O system calls.

close(): When the session is done, the socket is closed.

Client

The client initiates the connection to the server.

socket(): As on the server, the client creates a socket and gets back a file descriptor.

connect(): The client attempts to connect to the server’s address (IP + port or file path). If the server is listening, the OS establishes the connection between them.

read()/write(): The client can now send and receive data, using the same system calls as the server.

close(): Once done, it closes the socket.

Whether it’s TCP or Unix, the OS is responsible for managing the actual connection. It routes data between processes, handles buffering, and enforces permissions. For TCP, the OS also manages the networking stack.

Unix vs. TCP Sockets

Now that we understand how sockets work, we can comb through the differences between Unix sockets (local) and TCP sockets (over the network).

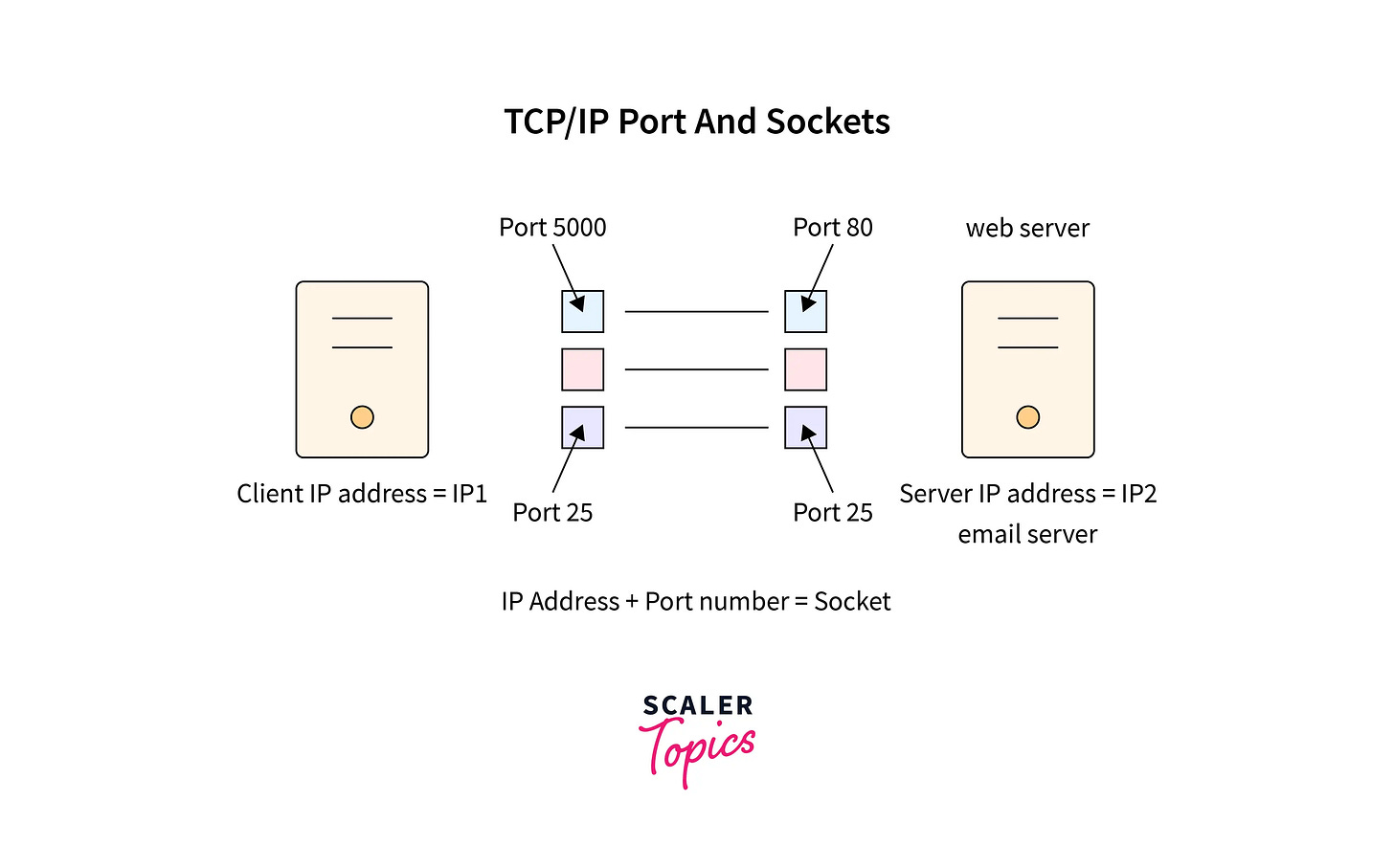

TCP Sockets

When a TCP connection is established:

The client calls connect() and specifies the server’s IP and port (e.g., 127.0.0.1:8080).

The server has already called bind() and listen(), and is waiting on the specified port.

The OS handles the TCP/IP stack:

It performs the three-way handshake (SYN → SYN-ACK → ACK).

The stack also ensures that all data (which is broken into packets) is delivered in order, without loss, and without duplication.

At the application layer, all of this is abstracted into a simple file descriptor.

Let’s look at how we’d initialize a basic IPv6 TCP server and client in C++ using raw socket APIs.

Server

```cpp
#include <iostream>
#include <cstdio>
#include <cstring>
#include <unistd.h>
#include <sys/socket.h>
#include <arpa/inet.h>
#include <netinet/in.h>

int main() {
    // IPv6 (AF_INET6) stream socket: TCP gives reliable, ordered delivery.
    int server_fd = socket(AF_INET6, SOCK_STREAM, 0);
    if (server_fd < 0) {
        perror("socket failed");
        return 1;
    }

    sockaddr_in6 addr;
    std::memset(&addr, 0, sizeof(addr));
    addr.sin6_family = AF_INET6;
    addr.sin6_addr = in6addr_any;  // Listen on all IPv6 interfaces
    addr.sin6_port = htons(8080);  // Port 8080

    if (bind(server_fd, (sockaddr*)&addr, sizeof(addr)) < 0) {
        perror("bind failed");
        return 1;
    }

    // Backlog: how many pending connections the kernel may queue for accept().
    if (listen(server_fd, 5) < 0) {
        perror("listen failed");
        return 1;
    }

    std::cout << "Server listening on port 8080 (IPv6)" << std::endl;

    sockaddr_in6 client_addr;
    socklen_t client_len = sizeof(client_addr);
    int client_fd = accept(server_fd, (sockaddr*)&client_addr, &client_len);
    if (client_fd < 0) {
        perror("accept failed");
        return 1;
    }

    // Read at most sizeof(buffer) - 1 bytes so the buffer stays null-terminated.
    char buffer[1024] = {0};
    ssize_t n = read(client_fd, buffer, sizeof(buffer) - 1);
    if (n > 0) {
        std::cout << "Received: " << buffer << std::endl;
    }

    const char* reply = "Hello from server!";
    send(client_fd, reply, std::strlen(reply), 0);

    close(client_fd);
    close(server_fd);
    return 0;
}
```

We can see that the server is using AF_INET6 to tell the OS to use IPv6 sockets and SOCK_STREAM to ensure lossless, ordered delivery.

It uses bind() to associate the socket with the specified IP address and port. Then it calls listen() to start accepting connection requests and accept() to wait for a client and obtain a dedicated file descriptor for it.

Client

```cpp
#include <iostream>
#include <cstdio>
#include <cstring>
#include <unistd.h>
#include <sys/socket.h>
#include <arpa/inet.h>
#include <netinet/in.h>

int main() {
    int sock = socket(AF_INET6, SOCK_STREAM, 0);
    if (sock < 0) {
        perror("socket failed");
        return 1;
    }

    sockaddr_in6 addr;
    std::memset(&addr, 0, sizeof(addr));
    addr.sin6_family = AF_INET6;
    inet_pton(AF_INET6, "::1", &addr.sin6_addr);  // Connect to localhost (::1)
    addr.sin6_port = htons(8080);

    if (connect(sock, (sockaddr*)&addr, sizeof(addr)) < 0) {
        perror("connect failed");
        return 1;
    }

    const char* msg = "Hello from client!";
    send(sock, msg, std::strlen(msg), 0);

    // Read at most sizeof(buffer) - 1 bytes so the reply stays null-terminated.
    char buffer[1024] = {0};
    ssize_t n = read(sock, buffer, sizeof(buffer) - 1);
    if (n > 0) {
        std::cout << "Received from server: " << buffer << std::endl;
    }

    close(sock);
    return 0;
}
```

The client uses connect() to establish a connection with the server.

From there, both the client and server use send() and read() to exchange data.

Finally, when they are done communicating, they close() the socket.

Unix Sockets

Unix sockets, also called Unix domain sockets, are used when two programs need to talk on the same machine. They’re best for fast, secure IPC.

To establish a Unix socket:

The server calls bind() with a file path (/run/miru/miru.sock).

The client calls connect() using that same path.

The kernel routes the data directly between the processes with no TCP/IP overhead.

Unlike TCP, Unix sockets transfer data directly through kernel memory buffers. There are no TCP/IP headers to build and parse, no handshakes, and no packet segmentation. (Your application still decides how to encode its messages; what disappears is the protocol processing.)

The socket can be permissioned using standard Unix tools like chmod or chown.

Data is exchanged with read() and write().

Note that there are two main types of Unix sockets:

Stream sockets (SOCK_STREAM) behave like TCP sockets. They ensure that data is transmitted through a reliable, ordered stream.

Datagram sockets (SOCK_DGRAM) preserve message boundaries, like UDP. Unlike UDP, though, Unix datagram sockets on Linux (and most Unix implementations) are reliable and don't reorder messages.

Like TCP, we’ll use socket(), bind(), listen(), and accept() on the server side, but instead of binding to an IP and port, we bind to a file path.

Server

```cpp
#include <iostream>
#include <cstdio>
#include <cstring>
#include <unistd.h>
#include <sys/socket.h>
#include <sys/un.h>

#define SOCKET_PATH "/tmp/miru.sock"

int main() {
    // AF_UNIX: a local (Unix domain) socket; SOCK_STREAM: reliable, ordered bytes.
    int server_fd = socket(AF_UNIX, SOCK_STREAM, 0);
    if (server_fd < 0) {
        perror("socket failed");
        return 1;
    }

    sockaddr_un addr;
    std::memset(&addr, 0, sizeof(addr));
    addr.sun_family = AF_UNIX;
    std::strncpy(addr.sun_path, SOCKET_PATH, sizeof(addr.sun_path) - 1);

    unlink(SOCKET_PATH);  // Delete existing socket file if present

    if (bind(server_fd, (sockaddr*)&addr, sizeof(addr)) < 0) {
        perror("bind failed");
        return 1;
    }

    if (listen(server_fd, 5) < 0) {
        perror("listen failed");
        return 1;
    }

    std::cout << "Server listening on " << SOCKET_PATH << std::endl;

    // We don't need the client's address here, so pass nullptr.
    int client_fd = accept(server_fd, nullptr, nullptr);
    if (client_fd < 0) {
        perror("accept failed");
        return 1;
    }

    // Read at most sizeof(buffer) - 1 bytes so the buffer stays null-terminated.
    char buffer[1024] = {0};
    ssize_t n = read(client_fd, buffer, sizeof(buffer) - 1);
    if (n > 0) {
        std::cout << "Received: " << buffer << std::endl;
    }

    const char* reply = "Hello from Unix socket server!";
    send(client_fd, reply, std::strlen(reply), 0);

    close(client_fd);
    close(server_fd);
    unlink(SOCKET_PATH);  // Remove the socket file on shutdown
    return 0;
}
```

AF_UNIX tells the OS that this is a Unix domain socket. We’re still using a SOCK_STREAM socket type.

Client

```cpp
#include <iostream>
#include <cstdio>
#include <cstring>
#include <unistd.h>
#include <sys/socket.h>
#include <sys/un.h>

#define SOCKET_PATH "/tmp/miru.sock"

int main() {
    int sock = socket(AF_UNIX, SOCK_STREAM, 0);
    if (sock < 0) {
        perror("socket failed");
        return 1;
    }

    // Connect to the same file path the server bound to.
    sockaddr_un addr;
    std::memset(&addr, 0, sizeof(addr));
    addr.sun_family = AF_UNIX;
    std::strncpy(addr.sun_path, SOCKET_PATH, sizeof(addr.sun_path) - 1);

    if (connect(sock, (sockaddr*)&addr, sizeof(addr)) < 0) {
        perror("connect failed");
        return 1;
    }

    const char* msg = "Hello from Unix socket client!";
    send(sock, msg, std::strlen(msg), 0);

    // Read at most sizeof(buffer) - 1 bytes so the reply stays null-terminated.
    char buffer[1024] = {0};
    ssize_t n = read(sock, buffer, sizeof(buffer) - 1);
    if (n > 0) {
        std::cout << "Received from server: " << buffer << std::endl;
    }

    close(sock);
    return 0;
}
```

Performance is higher, and the overhead is lower because there’s no networking stack involved.

But as we’ll see, there are tradeoffs between Unix and TCP sockets.

Tradeoffs Between Unix and TCP Sockets

From an engineer’s perspective, interacting with a Unix socket vs a TCP socket looks the same. But under the hood, they have some important differences.

Speed & Performance

Unix sockets are often 3-10x faster than TCP sockets because they avoid the entire networking stack.

We’ve discussed the TCP/IP stack (handshakes, packetization, and congestion control) that causes overhead for sending data.

Based on benchmarks, for the same workload, a Unix socket round trip takes 1-5 microseconds, whereas a TCP socket round trip takes 15-30 microseconds.

Microseconds seem minuscule, but in real-time systems like robots, which exchange thousands of messages per second, these costs add up.

Security

Unix sockets inherit file-level permissions from the host system:

You can use chmod/chown to lock access down to a user or group.

No open ports means no external actor can reach the socket over the network.

If you’re operating in a firewalled environment, there are no firewall rules to configure.

TCP sockets, on the other hand, live in the network namespace, even if bound to localhost. That means:

They’re exposed to firewall rules.

You must secure them with TLS or socket-level ACLs if you care about hardening.

You can layer on fine-grained controls like mTLS.

Setup Effort

TCP sockets require more effort to integrate. You’ll need to choose and expose a port. You’ll have to handle IP binding, firewalls, and TLS setups.

With Unix, you just have to bind to a file path, and that’s it!

One pattern we’ve seen is teams starting with Unix sockets early in the development cycle for fast iteration, then taking the time to set up TCP sockets once they’re ready to productionize.

Flexibility

This is where TCP wins.

Unix sockets only work within the same host. You can't use them across machines or even across containers unless you explicitly share the socket file.

TCP sockets are universal. They work across:

Physical hosts

Containers

Pods in Kubernetes clusters

If you ever need remote communication, TCP is the only option of the two.

Tooling & Ecosystem

TCP is the default for most networking tools and protocols. Therefore, it has a massive ecosystem.

Encryption: TLS/mTLS with OpenSSL, etc.

Load balancing: Built-in support with Envoy, HAProxy, and NGINX.

Monitoring: Works out of the box with Wireshark, tcpdump, and Prometheus exporters.

Debugging: Easy to probe with curl, telnet, netcat, etc.

Unix sockets are simpler, so the tooling is simpler too. Since there’s no networking stack involved, you can debug with basic Unix tools:

Use ss -xp or lsof to see which processes have the socket open.

Use socat or nc -U to send test messages through the socket.

How Miru Uses Sockets

Back to our original problem statement.

When we were architecting Miru, we knew that we needed the Miru Agent and SDK to communicate with each other quickly, securely, and reliably.

The Miru Agent is an open-source systemd service that runs on the customer’s robot. It’s responsible for identifying and pulling the latest version of the config.

The Miru SDK is also open source and is currently supported in C++, with Python and Rust coming soon. It’s used in our customers’ application code.

Whenever there’s a new config available, the Agent needs to notify the SDK immediately so that the application can consume it without delay.

Why We Chose Unix Sockets

We had three core requirements: speed, security, and simplicity.

⚡ Speed & Low Overhead

Our customers run heavy workloads on resource-constrained edge computers (think large PyTorch models running on a Jetson board). They didn’t want us adding any bloat to their systems.

This meant that adding a localhost server or sending data through the networking stack was overhead we couldn’t afford.

With Unix sockets, we could move data at blazing speed with almost no CPU overhead.

🔒 Security

Robots are safety-critical systems. If they go ‘rogue’, they can endanger people and facilities and cause critical damage.

Unix sockets gave us a secure channel scoped only to the local machine. There are no open ports and no external exposure. And with Unix permissions, we can restrict access so that the Miru Agent can only read/write to certain file paths.

Combine that with the fact that our Agent and SDK are fully open source, and customers have complete visibility into, and confidence in, what is running on their devices.

🪶 Simplicity

We wanted to ship fast, and that meant we wanted our setup to be as functional and straightforward as possible!

With a Unix socket, we could bind to /run/miru/miru.sock. This made the implementation lightweight, minimal, and easy to reason about.

A Unix socket was the right fit between our Agent and SDK. It gave us the performance to run on our customers’ devices, met their security standards, and provided the simplicity we needed to ship fast.

Conclusion

We’ve covered what Unix sockets are, how they work, and why they’re so powerful in robotics and embedded systems.

We built the Miru Agent and SDK for fast, secure, and efficient on-device communication. If you’re building similar software with tight constraints, be sure to give Unix sockets a try!

And if you’re looking for a configuration management tool built to be highly performant, check out Miru.
